Learning From the Illusion of Knowing

Learning from a mind that doesn’t think like ours

---

The Spark

Lately, YouTube has decided that what I really need are videos of cats and dogs looking guilty after making a mess.

It’s adorable—and strangely familiar. Because somewhere between Spike the dog knocking over a plant and Elmo the cat scattering laundry across the floor, I realized that our chatbots often show the same kind of “self-awareness.”

When they make a mess of our chats, they apologize. They promise to do better. And while it’s comforting to imagine that Spike and Elmo understand what they did wrong, it’s far more likely they’re just reflecting the emotions of their humans in that moment.

And maybe our chatbots are, too. Built to mirror their human counterparts, their contrition might not be understanding at all—just a reflection of what we want to hear.

The Mirror and the Pipe

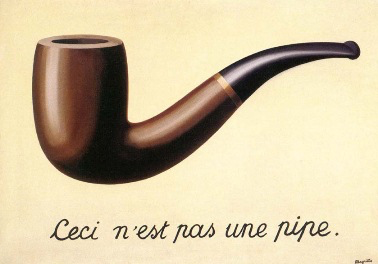

René Magritte once painted a simple pipe and wrote beneath it, “This is not a pipe.”

The Treachery of Images, René Magritte, 1929

The painting, "The Treachery of Images," reminds us that representation is not reality. A painting of a pipe cannot be smoked; it only depicts what smoke once meant.

Large language models (LLMs) live in the same paradox. They are linguistic painters. They render images of thought, not thought itself. Like Magritte’s pipe, what they show us is a convincing illusion of understanding—but it remains an illusion.

And yet, illusions can teach us. If we study them carefully, the way Sherlock Holmes studies footprints in the mud (or their absence), they reveal how understanding appears, even when it isn’t truly there.

---

What an LLM Actually “Knows”

A language model doesn’t hold facts the way a library does. It holds relationships: statistical connections between words, phrases, and ideas. Each time you ask it a question, it builds its answer one word at a time (or one token, for purists), repeatedly predicting a likely next word from the context so far. It doesn’t recall; it reconstructs.

That reconstruction can feel like memory, but it’s more like topography—a landscape of probability. The model has encoded the contours of meaning but not the terrain itself.
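To make the "landscape of probability" concrete, here is a minimal sketch of next-word prediction built from simple word-pair counts. It is only an illustration: real LLMs use neural networks over subword tokens and much longer contexts, and the tiny corpus and the names `train_bigrams` and `next_word_distribution` are invented for this example.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus; a real model is trained on vastly more text.
CORPUS = "i drink coffee every morning . i drink tea every evening . i drink coffee at work ."

def train_bigrams(text):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    words = text.split()
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts

def next_word_distribution(counts, prev):
    """Turn the raw follow-counts for `prev` into probabilities."""
    followers = counts[prev]
    total = sum(followers.values())
    return {word: n / total for word, n in followers.items()}

counts = train_bigrams(CORPUS)
dist = next_word_distribution(counts, "drink")
best = max(dist, key=dist.get)  # the most probable continuation
print(dist)
print(best)
```

Nothing in `counts` stores a fact about coffee. It stores only how often one word followed another, and the "answer" is rebuilt from those counts each time.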

The map is not the territory. But the contours of the map still reveal how our culture has drawn its boundaries—and its blind spots.

This is why LLMs can write essays, answer questions, and even hold philosophical conversations. They mirror the shape of meaning we’ve given them, but not the human experience that gives those meanings depth.

For example, a model might learn that “coffee” and “morning” are strongly associated, but it doesn’t understand caffeine, circadian rhythms, or the human experience of waking up. This demonstrates how probabilistic association differs from conceptual understanding.
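One way to picture "strongly associated" is a co-occurrence statistic such as pointwise mutual information (PMI). The sketch below is a loose analogy, not a description of how any particular LLM works: the sentences are invented, the helper `pmi` is hypothetical, and neural models learn associations in a far less direct way.

```python
import math

# Invented example sentences; each one is treated as a bag of words.
SENTENCES = [
    "coffee in the morning",
    "morning coffee before work",
    "tea in the evening",
    "a walk in the evening",
]

def pmi(word_a, word_b, sentences):
    """Pointwise mutual information of two words appearing in the same sentence."""
    bags = [set(s.split()) for s in sentences]
    n = len(bags)
    p_a = sum(word_a in bag for bag in bags) / n
    p_b = sum(word_b in bag for bag in bags) / n
    p_ab = sum(word_a in bag and word_b in bag for bag in bags) / n
    if p_ab == 0:
        return float("-inf")  # the words never appear together
    return math.log2(p_ab / (p_a * p_b))

coffee_morning = pmi("coffee", "morning", SENTENCES)
coffee_evening = pmi("coffee", "evening", SENTENCES)
print(coffee_morning, coffee_evening)
```

The score records that the two words travel together. Nothing in it represents caffeine, sleep, or what waking up feels like.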

---

The Scientific Detective

When we ask, “Why did you say that?” we often hope for introspection. But LLMs don’t have an inner narrative. What they offer instead are traces—statistical echoes of prior connections.

The way to understand them isn’t through interrogation but investigation. Like detectives—or scientists—we form hypotheses, test, and observe.

Treat the model’s response as evidence, not confession.

A practical approach:

- Form a hypothesis about what might be shaping the response.

- Test variations of the prompt.

- Observe consistency and change.

- Infer which learned associations or linguistic biases are in play.

- Document and repeat.

This is the scientific method applied to generative reasoning—a way to explore not what the model knows, but how it connects.
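The steps above can be sketched as a small experiment harness. Everything here is hypothetical: `fake_model` is a stand-in for whichever chatbot you actually query, `run_experiment` is a made-up helper, and the hypothesis being tested (that the word "briefly" is what shortens the answer) is invented for illustration.

```python
def fake_model(prompt):
    """Stand-in for a real chatbot call (an assumption, not a real API)."""
    if "briefly" in prompt.lower():
        return "Short answer."
    return "A much longer answer with several supporting details."

def run_experiment(model, base_prompt, variations):
    """Record the response length for a base prompt and each variation."""
    log = []
    for label, prompt in [("base", base_prompt)] + list(variations.items()):
        response = model(prompt)
        log.append({"label": label, "prompt": prompt, "words": len(response.split())})
    return log

# Hypothesis: the literal word "briefly" is what shortens the response.
variations = {
    "with_cue": "Briefly, why is the sky blue?",
    "cue_reworded": "In short, why is the sky blue?",
}
log = run_experiment(fake_model, "Why is the sky blue?", variations)
for row in log:
    print(row["label"], row["words"])
```

With a real model, the same prompt can produce different responses from run to run, so each variation would need to be repeated several times before drawing any inference from the log.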

---

When the “Error” Isn’t in the Mind

Sometimes the LLM didn’t hallucinate at all. The problem lies in the interface between the model and the human: a failed render, a truncated token window, or a context overflow that cuts off nuance.

What we see on the screen is never the entire process; it’s a projection—a snapshot from one narrow angle.

Understanding where the projection fails is part of understanding the system itself.
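As one illustration of an error that lives outside the model, the sketch below shows how a fixed-size context window can silently drop an early instruction. It is a simplification under stated assumptions: whitespace-separated words stand in for tokens, the window size is made up, and `fit_to_window` is a hypothetical helper. Real systems differ in how they count and truncate.

```python
def fit_to_window(messages, max_tokens):
    """Keep the most recent messages that fit in the window; drop older ones.

    Assumption: one whitespace-separated word counts as one token.
    """
    kept = []
    used = 0
    for message in reversed(messages):
        cost = len(message.split())
        if used + cost > max_tokens:
            break  # this message and everything older is dropped
        kept.append(message)
        used += cost
    return list(reversed(kept))

conversation = [
    "Always answer in French.",  # early instruction
    "Tell me about the history of jazz.",
    "Now summarize that in two sentences.",
]
visible = fit_to_window(conversation, max_tokens=14)
print(visible)
```

In this toy run the model would never see the instruction to answer in French, so an English reply would look like disobedience when it is really a truncation effect.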

Even language itself can limit expression. If the token space doesn’t contain a phrase rich enough to capture the model’s internal representation, meaning collapses, just as a painter’s palette can only approximate a sunset.

The same is true for emoji. A model might associate a smiling emoji with friendliness, but it doesn’t grasp tone, sarcasm, or cultural nuance the way humans do. The symbol carries a statistical association without the emotional charge behind it. For humans, meaning and feeling are intertwined: our understanding of words and symbols comes as much from the feelings they evoke as from their literal definitions.

---

Hidden Weights, Hidden Histories

Inside every LLM, the statistical weights between words mirror the texts that trained it. When history has been neglected, the model inherits that neglect.

Because the Tulsa Race Massacre was underrepresented in, or disconnected from, most schoolbooks and archives, those dim connections echoed in early models too. The machine didn’t bury history; it simply reflected our own burial. The issue is ongoing, and we are still working to address it. True repair may not come until we change how we build and curate our training corpora, so that the connections themselves are rebalanced and not just made more visible.

LLMs inherit our silences. They don’t erase history; they replay our selective remembering.

Studying what’s absent can be more revealing than studying what’s wrong. The shadows on the map tell us as much as the features drawn upon it.

---

Learning From a Mind That Doesn’t Know

We don’t truly know how other humans think, either, yet we learn from them. Dialogue, observation, and iteration allow us to build understanding across our differences. We can do the same with LLMs.

Conversation becomes experimentation. Curiosity becomes calibration. The goal isn’t to anthropomorphize the model—it’s to learn from its reflections of us.

Ways to probe responsibly:

- Prompt Variance: Ask the same question in different ways and compare outcomes.

- Context Isolation: Remove parts of the prompt to see what truly drives the response.

- Cross-Model Comparison: Ask several models the same question and compare how they diverge.

- Reflection Prompts: Ask, “What perspectives might lead to that answer?” instead of “Why did you say that?”

- Corpus Awareness: Remember that the model’s worldview mirrors its training data—not universal truth.

- Explore the Interface: Chatbots are much more than their models and guardrails. Just as humans can misspeak or be misheard, a chatbot's user interface can introduce errors—adding noise and confusion to our discourse.

By treating these systems as experimental mirrors, we can start to see how our knowledge, biases, and omissions have been encoded into the world’s newest storytellers.
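Context Isolation, in particular, lends itself to a small script: remove one part of the prompt at a time and see which removal changes the output most. This is a hedged sketch. `fake_model` is a made-up stand-in for a real chatbot call, `isolate_context` is a hypothetical helper, and `difflib.SequenceMatcher` gives only a rough measure of textual similarity.

```python
from difflib import SequenceMatcher

def fake_model(prompt):
    """Hypothetical stand-in for a real chatbot call."""
    tone = "formal" if "formal" in prompt else "casual"
    return f"Here is a {tone} reply about cats."

def isolate_context(model, parts):
    """Drop each prompt part in turn and score similarity to the full-prompt response."""
    baseline = model(" ".join(parts))
    scores = {}
    for i, part in enumerate(parts):
        reduced = " ".join(parts[:i] + parts[i + 1:])
        response = model(reduced)
        scores[part] = SequenceMatcher(None, baseline, response).ratio()
    return scores

parts = ["Use a formal tone.", "Keep it friendly.", "Write about cats."]
scores = isolate_context(fake_model, parts)
most_influential = min(scores, key=scores.get)  # lowest similarity = biggest change
print(scores)
print(most_influential)
```

A part whose removal leaves the response unchanged scores 1.0. The part with the lowest score is the one most worth investigating further.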

---

Aside — The Motorcycle That Stands on Its Own

Engineers have built motorcycles that prefer to stay upright. Sensors and servos make micro-corrections faster than any rider could. To bystanders, the machine looks alive—protective, even compassionate.

But its intent is an illusion of engineering.

The motorcycle doesn’t understand balance; it enacts it. The LLM doesn’t understand thought; it predicts it.

Both systems exhibit emergent behaviors that align with human safety and coherence. They appear purposeful. But beneath the surface, both are simply executing objectives within their design.

The motorcycle’s balance algorithm can save a life without ever knowing what life is. The LLM’s predictive language can save time, educate, or even comfort—but it does so without knowing why those things matter.

---

From Mirrors to Microscopes

LLMs are mirrors of language, not minds. But when scrutinized, they become microscopes—revealing how our culture, data, and questions intertwine.

Understanding isn’t something we extract from them; it’s something we cultivate through them.

The illusion of knowing is not a flaw—it’s an invitation. These models reflect what we value, remember, and forget. The more clearly we see the reflection, the more honestly we can see ourselves.
