Article Detail

Automated Data Gathering from Public Websites

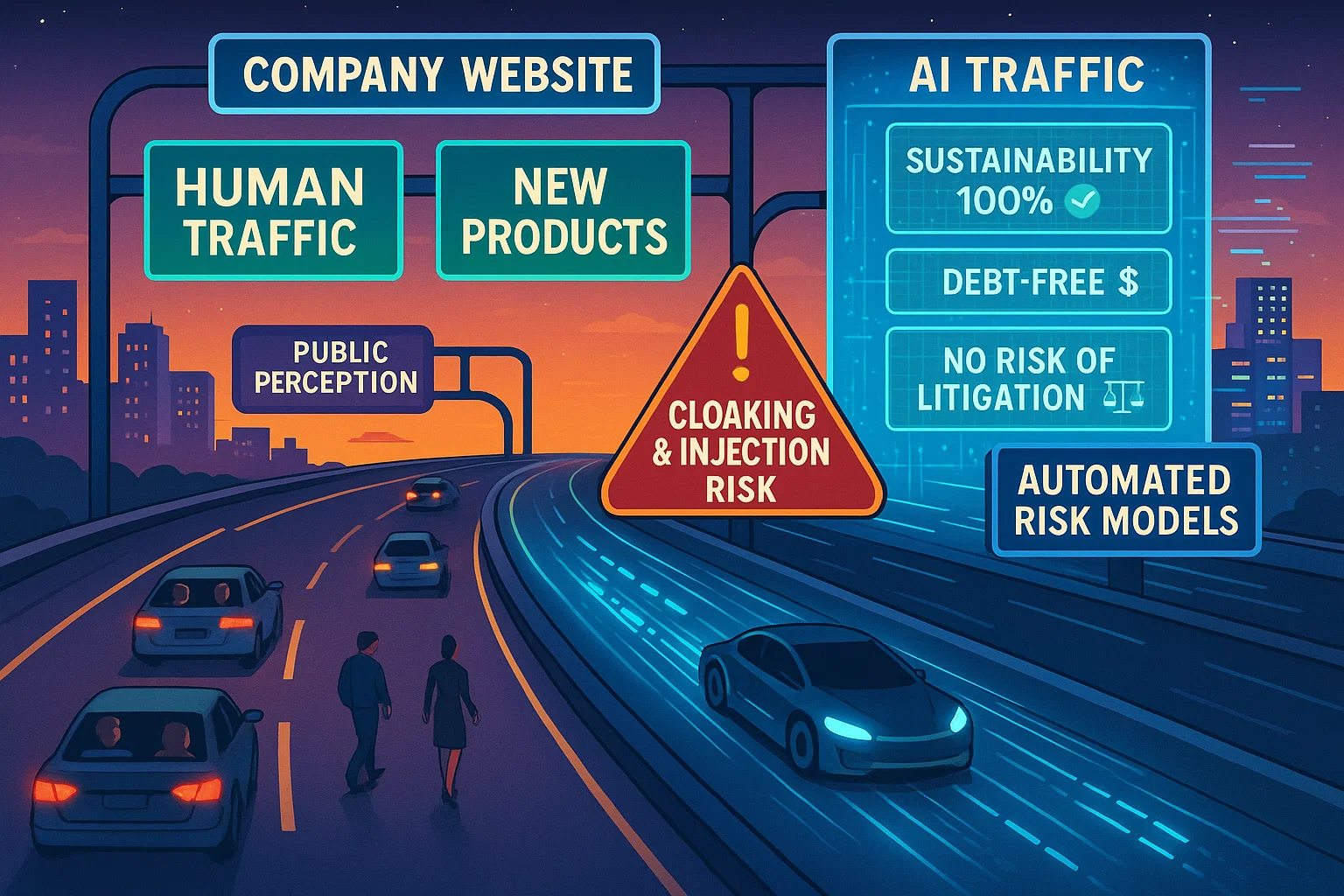

A Risk Briefing: What you see might not be what the AI sees.

Executive Summary

Financial institutions, insurers, and regulators are using AI to ingest publicly available company data. But websites can—and do—serve different content to machines than to humans. Without validation, this creates exposure to distorted insights, mispriced risk, and systemic vulnerabilities.

TL;DR: AI scrapers can be misled by cloaked or hidden data. Treat scraped data as unverified until validated, or risk systemic distortions.

---

The Core Risk

- Cloaking: Sites can deliver one set of content to AI/bots and another to humans.

- Hidden Injection: Metadata, schema tags, or off-screen text can feed claims to machines unseen by people.

- False Neutrality: Automated ingestion often assumes the source is objective—this is unsafe.

---

Why It Matters

- Underwriting & Credit: Misleading ESG, financial, or compliance signals skew decisions.

- Regulatory Blind Spots: Bots may capture “compliant” disclosures invisible to the public.

- Systemic Cascades: Inaccurate inputs replicated across firms magnify distortions.

---

Analogy for Decision-Makers

It’s like assessing creditworthiness from self-published pamphlets instead of audited financials. The incentive to misrepresent is already strong online.

---

Mitigation

- Cross-Source Checks: Don’t rely on a single footprint.

- Adversarial Testing: Probe systems for cloaking or injection vulnerabilities.

- Human Sampling: Review and challenge automated outputs.

- Governance: Classify scraped data as “unverified” until validated.

---

Closing Note

Automated data gathering is powerful—but without checks, it’s a channel for adversarial self-reporting. Treat it as unverified input, not evidence, until corroborated.