
Algorithmic Marketing Failure Case Study

The Threads / Meta “School Uniform” Ad Backlash

Executive Summary

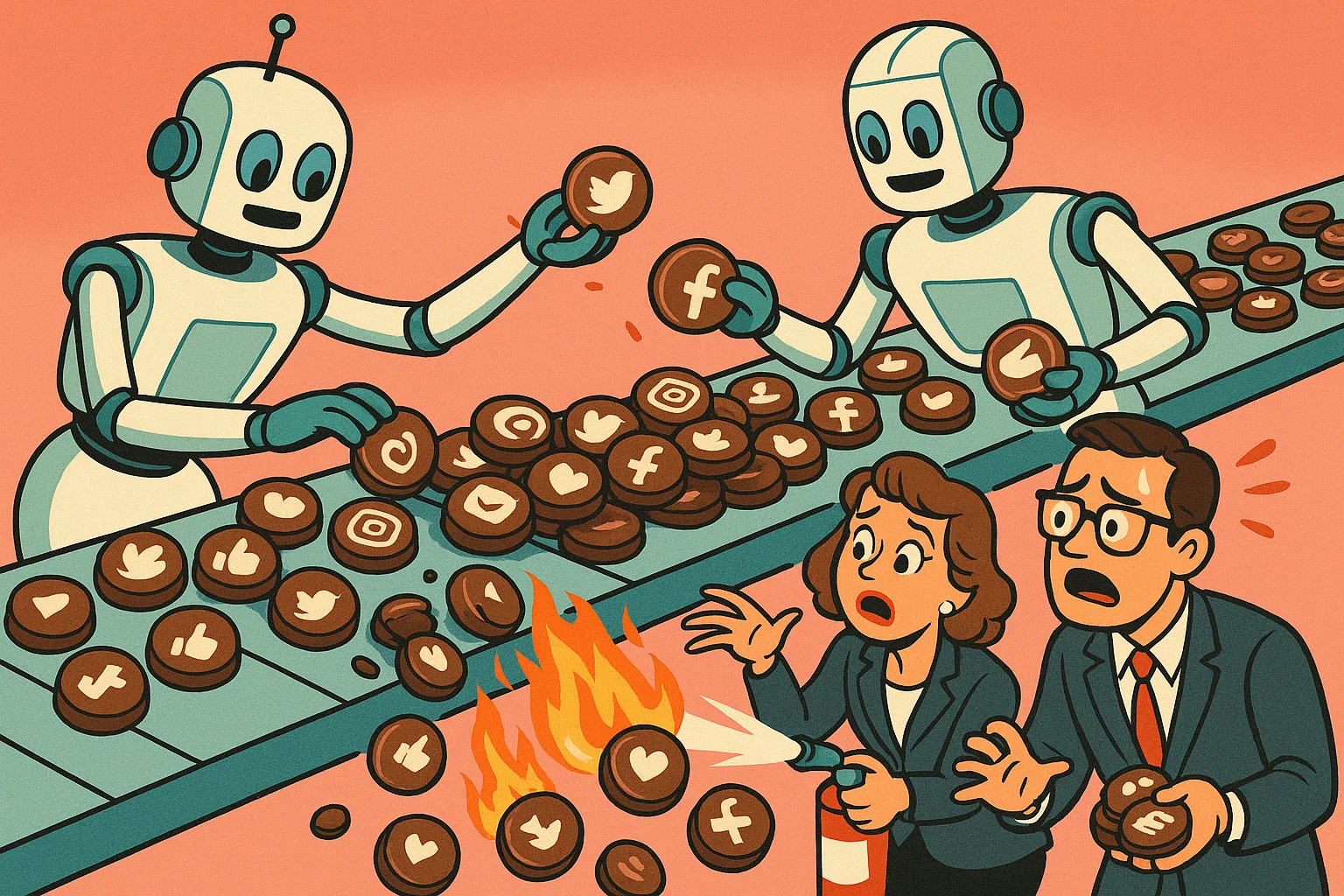

Meta ran promotional placements on Threads, tied to the back-to-school season, that surfaced photos of girls in school uniform (some as young as 13) to adult men. The content was technically compliant: it was sourced from public Instagram posts and, in some cases, from images that began as private but were later reposted publicly by other adults. That second path highlights the need for companies to clarify when and how content transitions from private to public. Still, the optics triggered public outrage, with accusations of sexualization and exploitation. The root cause was algorithmic optimization for engagement without human oversight. This case shows how easily “rules-compliant but norm-breaking” marketing can harm trust.

---

What Happened

- Trigger: Back-to-school campaigns pulled images of girls in school uniform into promotions.

- Execution flaw: Algorithms prioritized engagement, showing suggestive images of minors to inappropriate audiences.

- Defense from Meta: Claimed compliance, arguing that the content was public and did not violate policy, and that users could opt out.

- Public response: Strong backlash from parents, campaigners, and media; calls for tighter rules.

---

Root Causes

- Objective mismatch — Optimized for clicks, not cultural or ethical safety.

- Policy gaps — Rules blocked illegal content but not exploitative optics.

- Algorithmic blind spots — Models gave too little weight to age and context cues, such as a school uniform signaling a likely minor.

- Lack of human review — No diverse human oversight of sensitive categories.

- Cross-platform amplification — Public Instagram posts were repurposed as promotions, reaching unintended audiences.
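To make the objective mismatch concrete, here is a minimal Python sketch contrasting a ranker that sorts only on predicted engagement with one that applies a safety filter before ranking. Everything in it is hypothetical: the `Candidate` fields, the `minor_likelihood` score (assumed to come from some upstream classifier), the 0.2 threshold, and the example data. It illustrates the general idea of treating safety as a hard constraint; it is not a description of how Meta's systems work.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """A hypothetical promotional candidate with model-estimated scores."""
    post_id: str
    predicted_engagement: float  # assumed: estimated click-through probability
    minor_likelihood: float      # assumed: classifier output in [0, 1]
    sensitive_context: bool      # assumed flag, e.g. school or youth context


def rank_engagement_only(candidates: list[Candidate]) -> list[Candidate]:
    """Rank purely by predicted engagement: the objective mismatch."""
    return sorted(candidates, key=lambda c: c.predicted_engagement, reverse=True)


def rank_with_safety_constraint(
    candidates: list[Candidate], max_minor_likelihood: float = 0.2
) -> list[Candidate]:
    """Drop unsafe candidates first, then rank the remainder by engagement.

    Safety is a hard constraint here, not a score term that a high
    engagement estimate could outweigh.
    """
    eligible = [
        c for c in candidates
        if c.minor_likelihood <= max_minor_likelihood and not c.sensitive_context
    ]
    return rank_engagement_only(eligible)


# Hypothetical toy pool: the risky item also has the highest engagement.
pool = [
    Candidate("uniform_photo", 0.09, 0.85, True),
    Candidate("coffee_mug", 0.04, 0.0, False),
    Candidate("desk_lamp", 0.03, 0.0, False),
]

print([c.post_id for c in rank_engagement_only(pool)])
# → ['uniform_photo', 'coffee_mug', 'desk_lamp']
print([c.post_id for c in rank_with_safety_constraint(pool)])
# → ['coffee_mug', 'desk_lamp']
```

With this toy pool, the engagement-only ranker puts the uniform photo first, while the constrained ranker returns only the two neutral product posts. Filtering before ranking means no engagement score, however high, can pull an unsafe candidate back in; a weighted penalty would not guarantee that.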

---

Impacts

- Trust erosion — Users, parents, and advertisers lose confidence.

- User safety risks — Minors’ images amplified to broad, potentially unsafe audiences.

- Regulatory attention — Potential investigations and stricter advertising rules.

- Advertiser concerns — Brands wary of being associated with unsafe environments.

---

Recommendations for Our Audience

Track 1: Mindfully Getting Started

- Design for the worst plausible interpretation — If an ad can be read as exploitative, block it.

- Put diverse humans in the loop — Especially on sensitive campaigns (youth, sexuality, health, politics).

- Go beyond compliance — Being legal or “policy-compliant” isn’t enough; add brand-safety rules that reflect social norms.

- Pilot with boundaries — Start with lower-risk categories (products, neutral demographics) before scaling.

- Be transparent about content shifts — Make it clear to users and audiences when content transitions from private to public, and how that affects its use in marketing.
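Several of the Track 1 practices can be combined into a single pre-serving gate. The minimal Python sketch below is illustrative only: the `Decision` outcomes, the category sets, and the two boolean risk flags (assumed to come from classifiers or reviewer checklists) are hypothetical names, not part of any real ad platform's API.

```python
from enum import Enum


class Decision(Enum):
    BLOCK = "block"
    HUMAN_REVIEW = "human_review"
    AUTO_APPROVE = "auto_approve"


# Hypothetical category lists; each team would define its own.
SENSITIVE_CATEGORIES = {"youth", "sexuality", "health", "politics"}
PILOT_CATEGORIES = {"products"}  # low-risk categories cleared for automation


def route_creative(category: str, may_depict_minor: bool,
                   exploitative_reading_possible: bool) -> Decision:
    """Decide how a candidate ad creative is handled before it can be served."""
    # Worst plausible interpretation: if it can be read as exploitative, block it.
    if exploitative_reading_possible:
        return Decision.BLOCK
    # Sensitive subjects, or any sign of a minor, always go to human reviewers.
    if category in SENSITIVE_CATEGORIES or may_depict_minor:
        return Decision.HUMAN_REVIEW
    # Pilot boundary: automate only categories explicitly cleared as low risk.
    if category in PILOT_CATEGORIES:
        return Decision.AUTO_APPROVE
    # Anything else defaults to review rather than to serving.
    return Decision.HUMAN_REVIEW


print(route_creative("products", False, False))  # Decision.AUTO_APPROVE
print(route_creative("youth", True, False))      # Decision.HUMAN_REVIEW
print(route_creative("products", True, True))    # Decision.BLOCK
```

The ordering is the design choice: the exploitative-reading check runs first so that no category or pilot rule can override it, and unlisted categories fall through to human review instead of being served by default.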

Track 2: Responding to a Misfire

- Immediate pause — Stop the offending campaign as soon as risk is flagged.

- Acknowledge and explain — Issue a clear statement of what happened and what you’re doing to fix it.

- Audit and adapt — Review campaign logic, ad inventory, and audience targeting.

- Policy update — Close the gaps that allowed the misfire (e.g., prohibit minors’ images in promos).

- Transparency and timeline — Publish changes and commit to follow-up reports.

---

Lessons Learned

- Algorithms don’t understand ethics; they only chase metrics.

- Compliance is not the same as trust.

- Small companies can be toppled by one scandal; big ones can lose years of goodwill.

- Always design both a safe entry strategy and a crisis response plan.

---

Key Takeaway

Algorithmic marketing can scale reach — and mistakes. Success requires both mindful design at the start and a ready-to-go response plan when norms are breached.

---

Sources

- The Guardian — coverage of parents’ outrage and details of images used to promote Threads.

- Reuters — background on Threads ad testing and Meta’s ad rollout.

- The Verge — context on Threads ad expansion and ad inventory mechanics.