Why GenAI Feels Magical at Home but Clumsy at Work

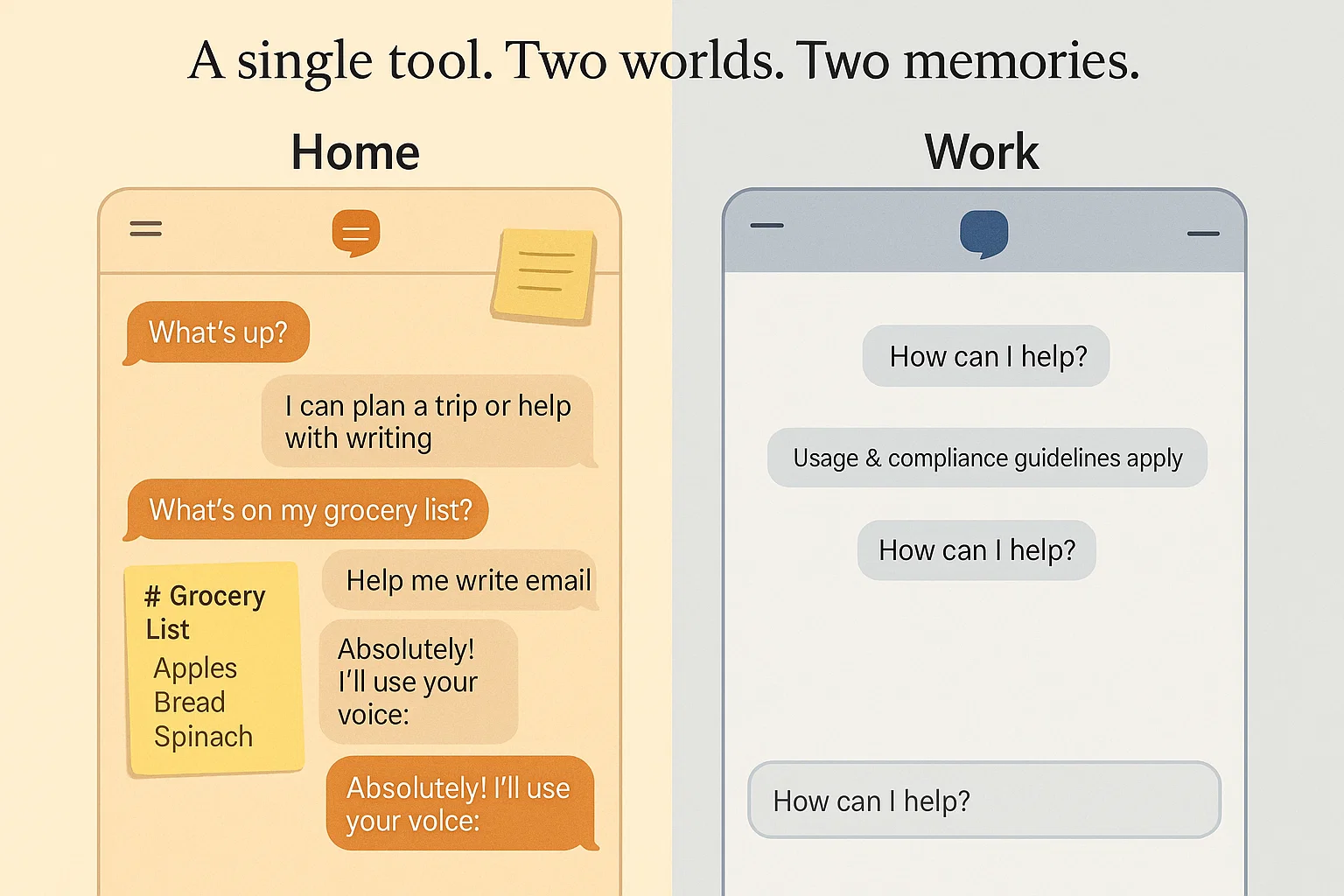

Because, despite using the same names, they are different tools built for different use cases.

The Spark

Organizations are voicing the same quiet frustration (overheard in forums and at my local coffee shop): employees use AI tools at home with ease, yet adoption at work is slow, hesitant, or uneven. Leaders want to know: “If they can use these tools in their personal lives, why do they struggle with them at work?”

The short answer is that the two tools are not the same. The longer answer—and the one that matters for anyone struggling with the rollout of GenAI—is that personal and enterprise chatbots operate under entirely different rules. The difference starts with memory.

---

Other Tools That Change Drastically Between Home and Work

While there are a few partial parallels—such as email, browsers, and smartphones—none of them truly mirror the memory gap at the heart of GenAI. Most home–work differences are changes in policy, not changes in how the tool thinks about you.

A personal chatbot that remembers your tone, preferences, ongoing projects, and writing habits is unprecedented in mainstream software. Enterprise software has never before encountered a tool that can create a personalized cognitive model of a specific employee.

That makes this moment unusual. We can draw loose comparisons, but the truth is more straightforward and more honest: GenAI is one of the first technologies where the workplace version is not just more restricted. It is a fundamentally different kind of intelligence.

This is why adoption feels strange. We don’t have many historical parallels for a tool that:

- becomes more helpful the more it knows you, but

- becomes more dangerous (legally and organizationally) the more it remembers.

It places leadership in unfamiliar territory: balancing personalization against governance in a way that no previous workplace technology required.

Why This Transition Feels Different

This shift is not just technological—it is conceptual. GenAI introduces a class of tools whose effectiveness depends on what they remember, how they adapt, and how users interact with them. No previous workplace technology created such differences between personal and enterprise use.

Old Way: Traditional Workplace Tools

- Tools behaved similarly at home and at work.

- Personalization was nice-to-have, not essential.

- Governance focused on access control and permissions.

- Employees rarely needed to understand how the tool actually worked.

New Way: GenAI and Cognitive Tools

- Home and enterprise versions behave fundamentally differently.

- Personalization and memory shape the entire user experience.

- Governance now includes data ownership, liability, and cognitive outputs.

- Employees need functional understanding of how GenAI works to use it safely and effectively.

Home versus Work

Since our GenAI (for the most part) exists in the cloud, we access it through user interfaces that often resemble one another. This familiarity is only screen-deep.

Home AI: Designed to Know You

Consumer chatbots build a relationship with their user through memory. They can:

- remember personal preferences

- keep track of ongoing projects

- learn tone and style

- adjust over time

It’s like talking to someone who has been quietly paying attention for months. The familiarity lowers friction and increases trust. It feels like collaboration.

At home, this is not a risk. It’s a feature.

---

Work AI: Designed to Forget You

Enterprise chatbots, for legal and operational reasons, typically do not retain information about the user from one session to the next. They are intentionally stateless.

This isn’t because the IT department doesn’t care. It’s because the enterprise must protect:

- personal privacy

- compliance requirements

- data residency and sovereignty laws

- auditability

- legal liability

A chatbot with long‑term memory inside a corporation is not simply a tool. It is a record. And records have obligations.

The result is a familiar frustration: employees must re‑explain context, repeat preferences, and rebuild momentum every morning. The tool feels slow, even when the underlying model is advanced.
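
The contrast can be sketched in a few lines of Python. This is a toy illustration only: the class names `PersistentAssistant` and `StatelessAssistant` are hypothetical and do not correspond to any real product. The first keeps what it has been told per user across sessions; the second sees only what is supplied in the current session.

```python
from collections import defaultdict


class PersistentAssistant:
    """Toy consumer-style assistant: facts survive across sessions."""

    def __init__(self):
        # Hypothetical long-term store, keyed by user id.
        self._memory = defaultdict(list)

    def start_session(self, user_id, new_facts=()):
        self._memory[user_id].extend(new_facts)
        # Everything ever told to the assistant is available as context.
        return list(self._memory[user_id])


class StatelessAssistant:
    """Toy enterprise-style assistant: nothing is kept between sessions."""

    def start_session(self, user_id, new_facts=()):
        # Only what the user supplies in this session is available.
        return list(new_facts)


home = PersistentAssistant()
work = StatelessAssistant()

# Day one: the user explains a preference to both assistants.
home.start_session("ana", ["prefers short summaries"])
work.start_session("ana", ["prefers short summaries"])

# Next morning: the user starts a new session without re-entering anything.
home_context = home.start_session("ana")
work_context = work.start_session("ana")
```

Here `home_context` still holds the preference, while `work_context` is empty: the cold start employees experience each morning.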

---

Why “Just Turn Memory On” Isn’t the Answer

When leaders first encounter this gap, they often suggest the obvious fix: enable memory at work the way it works at home.

But turning on memory inside the enterprise triggers a cascade of unresolved questions:

- Who owns the data the AI remembers?

- Can those memories be used in performance reviews?

- Can they be subpoenaed?

- What happens when someone changes roles or leaves?

- Which jurisdiction’s laws apply to the stored memories?

- How do we protect against misuse or unintentional exposure?

A personal AI assistant remembering your email habits is convenient. A corporate AI assistant remembering your email habits may be discoverable evidence.

The stakes are very different.

---

The Real Problem: We’re Comparing Two Different Machines

When leaders compare personal AI tools to enterprise tools, they are unintentionally comparing two systems that were never designed to function the same way.

At home:

- memory is persistent

- risk is personal

- constraints are minimal

At work:

- memory is erased

- risk is institutional

- constraints are layered and often invisible to end users

Employees feel the difference instinctively. Without memory, every task becomes a cold start.

---

Looking Forward: The Evolution Underway

We are still in the early days of GenAI, and new architectures, strategies, and tools are released weekly, if not daily. Today there are at least two options for reducing the friction, and more will follow:

- Adding memory for enterprise chatbots

- Integrating GenAI into current workflow applications

These are the early building blocks of a future in which enterprise AI can be helpful without creating institutional risk.

Safe Memory for the Enterprise

The landscape is shifting. Modern enterprise AI is beginning to support new forms of structured, safe memory:

- Short‑term project memory — Context persists for the duration of a project, then expires.

- Role‑based memory — Patterns associated with a job role, not a specific person.

- Team‑shared memory — Updated collaboratively through shared documents and workflows.

- Document‑level memory (canvas memory) — The AI remembers everything inside the working file.

- Employee‑owned personal memory — Opt‑in, portable, auditable, and under user control.
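
As a rough sketch of the first item, short-term project memory could be modeled as a store that stops returning context once the project window closes. The `ProjectMemory` class, its fields, and the 90-day window below are assumptions made for illustration, not a description of how any vendor implements it.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class ProjectMemory:
    """Toy project-scoped memory that stops returning notes after it expires."""

    project: str
    expires_at: datetime
    notes: list = field(default_factory=list)

    def remember(self, note, now):
        # New context is accepted only while the project is active.
        if now < self.expires_at:
            self.notes.append(note)

    def recall(self, now):
        # After expiry the context is no longer available to the assistant.
        return list(self.notes) if now < self.expires_at else []


start = datetime(2025, 1, 1)
memory = ProjectMemory("q1-launch", expires_at=start + timedelta(days=90))
memory.remember("audience: retail partners", now=start)

during = memory.recall(now=start + timedelta(days=30))
after = memory.recall(now=start + timedelta(days=120))
```

The memory is tied to the project rather than to the person, so its lifetime can be governed by a retention rule instead of by an individual's history with the tool.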

Integrating GenAI into Enterprise Apps

Enterprises are identifying which processes can benefit the most from GenAI and integrating solutions into their existing workflow applications. While these solutions can take a bit longer to build and deploy, they allow enterprises to maintain governance and enforce compliance while ensuring that the underlying GenAI models have access to:

- Approved and tested prompts

- Relevant data passed automatically to the GenAI model, reducing manual effort and mistakes

- Tools to render ready-to-go output (including charts and diagrams)
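
A minimal sketch of the first two items, assuming a hypothetical registry of reviewed prompts (`APPROVED_PROMPTS`) and a made-up support-ticket record: the application fills an approved template from its own data, so the employee never types the prompt or pastes the context by hand.

```python
from string import Template

# Hypothetical registry of prompts that have been reviewed and approved.
APPROVED_PROMPTS = {
    "summarize_ticket": Template(
        "Summarize support ticket $ticket_id for the $team team.\n"
        "Ticket text:\n$body"
    ),
}


def build_prompt(prompt_name, record):
    """Fill an approved prompt from an application record.

    Raises KeyError if the prompt is not in the registry or a field is missing.
    """
    template = APPROVED_PROMPTS[prompt_name]
    return template.substitute(record)


# Illustrative record, as a workflow application might hold it.
ticket = {"ticket_id": "T-1042", "team": "billing", "body": "Invoice total is wrong."}
prompt = build_prompt("summarize_ticket", ticket)
```

Requests for a prompt that is not in the registry fail instead of falling back to free text, which is one simple way the application, rather than the user, can enforce governance.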

---

Why Leadership Needs to Understand This Now

When executives believe GenAI adoption is slow because employees are unwilling or untrained, strategies tend to focus on motivation and education. Education is a lever we can act on now (see the sidebar below), but motivation alone can’t overcome the architectural gap that still exists.

The real bottleneck is architecture. The systems behave differently because they are built differently.

Organizations that prepare for safe memory through governance, policy, culture, and shared understanding will be ready for the next generation of enterprise tools. Those that don’t may find themselves scrambling to catch up.

Understanding the memory gap isn’t a technical detail. It’s a prerequisite for deploying GenAI effectively, safely, and competitively.

---

Sidebar: The Education Foundation Every Organization Needs

Before architectures evolve and before memory becomes safe enough for widespread enterprise use, there is one lever every organization can pull today: education. Not hype-driven inspiration sessions, but grounded literacy.

A practical early curriculum should give employees and leaders a working grasp of three essentials:

The Tools — and the Differences Between Home and Work: People need to understand why the enterprise version of a tool behaves differently from the personal one. This includes:

- how identity, memory, and personalization differ

- why certain features exist at home but not at work

- how compliance and risk shape the enterprise experience

How GenAI Actually Works (High Level, No Math Required): Employees and leaders should know:

- what a model is and what it is not

- how context windows work

- what “memory” means in different environments

- the limits of pattern-matching and generation

- the difference between a chatbot and a search engine

What Can Go Wrong — and How to Make Things Go Right: This includes:

- common failure modes (hallucination, overconfidence, missing context)

- the human factors that amplify risk

- safe prompting habits (including using guardrails)

- validation techniques

- when not to use AI

This isn’t the complete training—just the blueprint for what early education needs to cover so the workforce is prepared for the tools they have today and the memory-enabled tools coming next.

---

Sidebar: Questions Leaders Should Be Asking Now

Even before enterprise AI gains safe, persistent memory, we can accelerate readiness by asking the right questions. These aren’t technical deep-dives—they’re strategic prompts that shape governance, culture, and direction.

- What kind of memory do we want our enterprise AI to have—if any?

Short‑term project memory? Role memory? User-controlled personal memory? Something else?

- What kind of user experience do we want?

Do we need to integrate GenAI into our applications to achieve our goals while managing risks?

- Who should own employee‑related AI memory?

The company? The individual? A shared governance model? Ownership determines liability.

- How will we audit, monitor, and retire AI-generated knowledge?

Memory is power, but also responsibility. Governance must evolve with capability.

- How will our teams learn to work with AI safely and effectively?

Education is not optional. Literacy determines both safety and productivity.

- What risks do we accept, which do we mitigate, and which do we refuse?

A mature risk conversation prepares the organization before capability arrives.

- How will AI amplify—not replace—the expertise inside the company?

This sets the cultural tone and prevents fear-driven stagnation.

These questions help move us from reacting to proactively shaping the future GenAI environment.

---

Closing Thought

The irony is that the feature employees love most in their personal AI—the long‑term memory that makes the tool feel like a partner—is the very thing enterprises must be cautious about. The challenge ahead is not deciding whether enterprise AI should remember, but how, what, for how long, and under whose control.

Organizations that grasp this distinction have a decisive advantage.