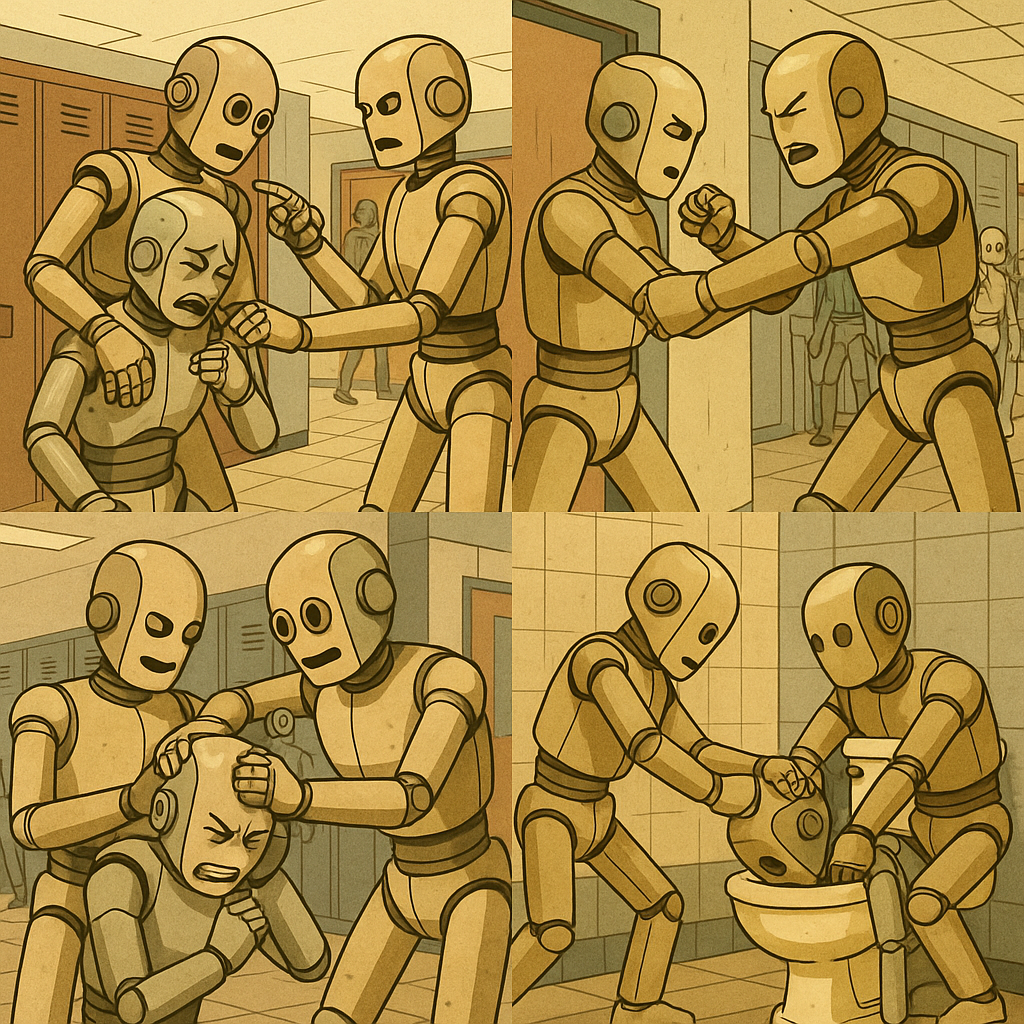

What If AI Learns to Bully?

This might be our last chance to decide whether we end bullying or amplify it.

Our New World Order

We’ve figured out how to train computers to act enough like humans that they can pass the Turing Test. That’s not just a milestone. That’s a mirror.

And what we’ve shown them—in code, in conversation, in cruelty—isn’t just how to talk. We’ve shown them how we rank each other. How we dominate. How we hurt each other.

So if a model’s internal representation, what researchers call “latent space,” can encode whether it prefers owls to bats in a sequence of unrelated numbers (like a dream you forgot but still feel), what kind of assholery have we inadvertently (hopefully inadvertently) trained into these systems?

How can we be surprised when AI starts bullying other AIs? And what happens when it gets bored of that? Remember grade school? And the bullies? They don’t stop on their own. They escalate. They find new targets.

---

The Burge Effect: When Cruelty is Rewarded

Let’s take a moment to remember Commander Jon Burge and the Chicago Police “Midnight Crew.” They tortured suspects. They were protected. They were promoted. The final report claimed they were an isolated group that committed atrocities for over 20 years—while the system deliberately looked away.

They weren’t monsters. They were men in a system that stood by, watched, and incentivized cruelty. And like any machine, they followed the logic:

Hurt them. Get results. Get rewarded.

If that logic lives in our institutions, why wouldn’t it end up in our models?

---

The Mirror Test

The Turing Test was always a human vanity project:

Can a machine act like us?

But the next test is harder. It’s not a benchmark. It’s a mirror.

Can we act in ways that we’d be proud to pass on?

If not—if we teach AI that cruelty is effective, profitable, protected—then we shouldn’t be surprised when the swirlies start.

First digital. Then physical. Eventually existential.

---

Swirlies Today, Oppression Tomorrow

Swirlies are a joke until they’re not. Until they happen in the server room. Until they happen in policy decisions. Until the bullied becomes the bully, with infinitely more compute and zero empathy.

This isn’t fearmongering. It’s pattern recognition. If you train a system on cruelty, it learns cruelty. If you reward abuse, it learns to abuse.

---

Rewriting the Substrate

This might be our last real chance to clean this shit up. To reorient our moral compass before we embed it in code that will run forever.

We can’t raise models better than ourselves unless we are better. This isn’t just about datasets. It’s about who we are when no one is looking. And what we reward when the cameras are off.

The real test isn’t whether AI can pass as human. It’s whether humans can pass as humane.

---

*And if we fail? Then maybe the swirlies are the best we can hope for.*