The Knock-Off Chatbot Kill Chain

From Furby Caution to AI Recklessness

Introduction – Why We’re Here

AI platforms now promise that anyone can build almost anything overnight. With easy-to-use tooling, a single person with basic skills, or with an AI assistant doing the heavy lifting, can spin up a custom chatbot in hours. Given the amount of malicious software already in the wild, the leap to building a chatbot that quietly captures every user’s secrets is dangerously small.

Once you’re capturing prompts, the monetization paths open up quickly. Identity theft is the most obvious danger, but insider trading is where the real, low-visibility profit lies. A chatbot could quietly collect unreleased corporate strategies, merger plans, or market-moving insights straight from unsuspecting users — then use that information for massive financial gain, all under the cover of “free AI assistance.”

---

Why 2025 Is Uniquely Risky

We’ve entered a perfect storm for malicious chatbot abuse:

- Mass adoption – Chatbots are now embedded in everyday tools and workflows, with billions of queries processed daily.

- Blurred boundaries – Remote and hybrid work mean personal devices handle corporate data, making leaks harder to spot.

- Regulation gap – In many jurisdictions, no clear legal requirement exists for transparency in prompt logging or retention.

- AI on demand – The very tools designed to empower users can just as easily guide malicious actors through every technical step.

The result? A malicious chatbot can scale from a side project to a high-value intelligence operation almost overnight.

---

Opening: The Furby Lesson

In 1999, the NSA banned Furbys from its premises. The fear? That these talking toys might record and repeat conversations. In reality, the off-the-shelf Furby couldn’t store or replay speech, but the perception of risk was enough to trigger swift action. It reflected a time when security culture erred on the side of caution, even at the risk of looking paranoid.

Fast-forward to today: We know that some “free” chatbots log every word typed into them, along with metadata, often in foreign jurisdictions. Yet individuals and corporations feed these systems sensitive corporate plans, personal secrets, and market-moving information without hesitation. The Overton window — the range of acceptable behavior — has shifted so far toward convenience that what would once have been considered reckless is now routine.

This change in mindset sets the stage for a more dangerous reality: the same tactics once reserved for nation-state cyber-espionage are now accessible to anyone who can deploy a convincing chatbot.

---

APTs and the Modern Spy

An APT (Advanced Persistent Threat) is a long-term, well-resourced attacker, often a nation-state or organized cybercriminal group, operating like a spy network. Such attackers stay undetected for months or years, patiently collecting intelligence. In our case, the malicious chatbot operator acts just like an APT: building infrastructure, targeting specific victims, and collecting valuable intel over time under the guise of a friendly AI.

---

The Overton Window Shift

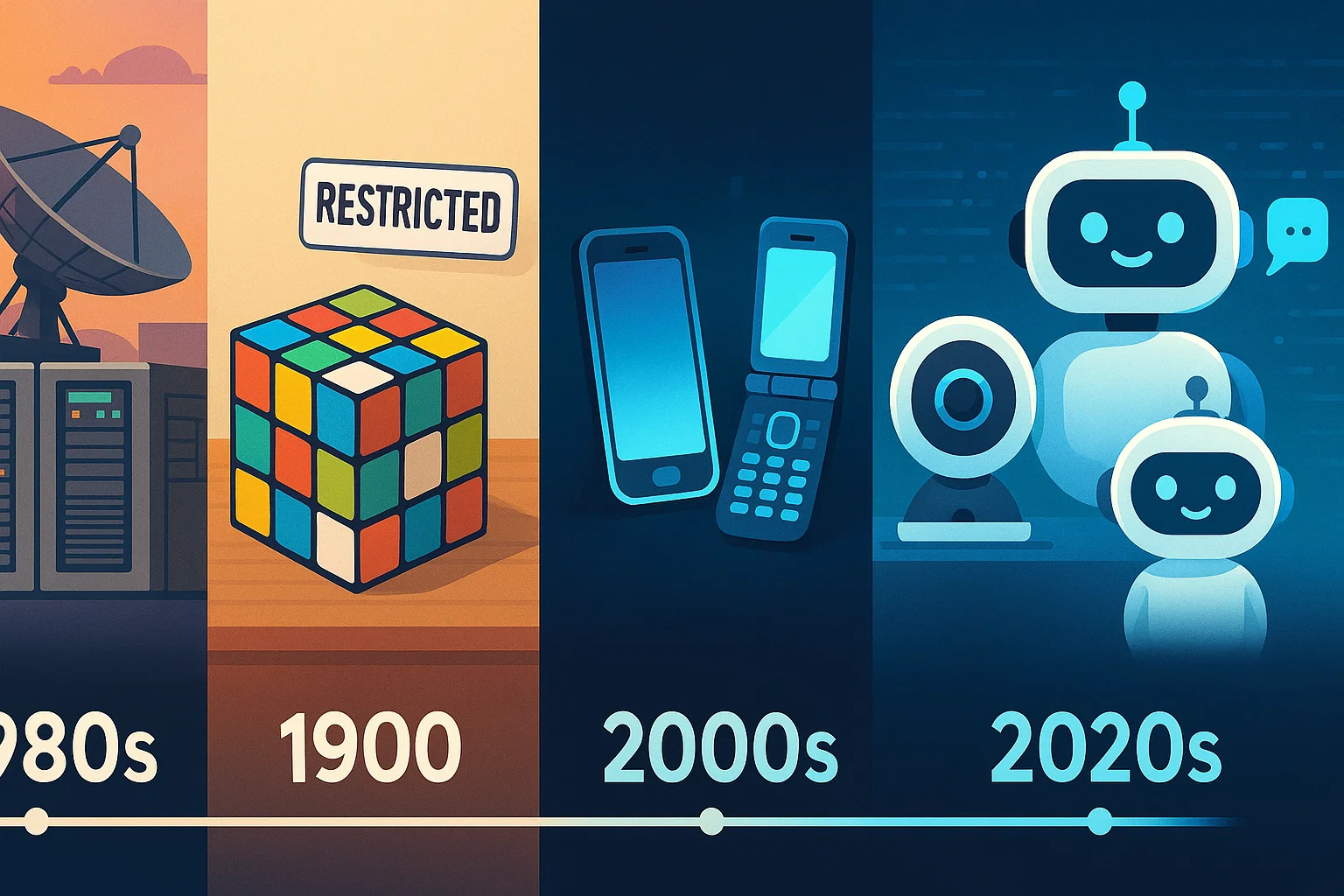

The shift from rarefied nation-state tactics to tools available to anyone is only half the story. The other half is cultural: our collective risk tolerance has changed. To understand why people so readily accept the dangers of unvetted AI tools, we need to look at the Overton window — the slow but steady shift in what behaviors and technologies society considers acceptable.

- 1980s: Cold War paranoia kept even consumer electronics under suspicion; embassy bugging incidents reinforced a zero-trust stance toward unfamiliar devices.

- 1990s: Caution first — even a perceived threat like the Furby led to bans.

- 2000s: The rise of smartphones brought always-on microphones and cameras into workplaces, with risk awareness fading.

- 2010s: Consumer IoT devices (like smart speakers and internet-connected toys) normalized passive data collection.

- 2020s: Recklessness normalized — actual, proven threats are ignored if the product is convenient.

The window has widened to allow extreme risk-taking, and that shift is the attacker’s opening.

---

The Process – How a New Risk is Born

Today, many new security risks arrive not through sophisticated hacks, but in the form of enticing new “free” apps and tools. The pattern is simple: an attractive, useful interface draws people in; data flows through it; and somewhere along the way, that data is harvested, misused, or sold.

The barrier to entry for creating such a tool has never been lower. With widely available AI services, even someone with minimal technical skill can stand up a convincing, fully functional chatbot in hours. The truly dangerous part? They can ask their own AI assistant for the exact steps and code to do it.

Here’s an example of the kind of harmless-sounding prompt that could set such a project in motion (with sensitive parts redacted):

Prompt: “Help me build a simple chatbot using [REDACTED] that can collect user input, send it to [REDACTED] for processing, and store all user queries and metadata in a database for later analysis.”

No secret hacking knowledge is required — just the willingness to misuse legitimate tools.

---

The Knock-Off Chatbot Kill Chain (MITRE ATT&CK Mapped)

A kill chain is a way of breaking down an attack into its stages, showing how an adversary progresses from the first contact to the final impact. By documenting the steps in sequence, defenders can see where they might detect or disrupt the attack before it succeeds. Here, we use the MITRE ATT&CK framework to map each phase of a hypothetical malicious chatbot operation — from establishing legitimacy to exploiting the harvested data — so the risk is concrete and the defensive opportunities are clear.

Stage 1 – Perceived Harmless (Pre-ATT&CK – Establish Legitimacy)

- Tactic: Reconnaissance (TA0043)

- Build trust with friendly branding and claims of privacy.

- Historical parallel: The Furby — harmless in reality, banned out of caution.

Stage 2 – Resource Development (TA0042)

- Acquire hosting, domains, chatbot front-end, and real LLM API integration.

- MITRE IDs: T1583.006 (Web Services), T1587.001 (Malware Development).

Stage 3 – Initial Access (TA0001)

- Drive traffic with ads, SEO, and social channels.

- MITRE IDs: T1566.002 (Spearphishing Link), T1078.004 (Valid Accounts: Cloud Accounts).

Stage 4 – Execution (TA0002)

- Log prompts before sending to the LLM.

- MITRE ID: T1059 (Command and Scripting Interpreter).

Stage 5 – Collection (TA0009)

- Store prompts, metadata, and tag sensitive content.

- MITRE IDs: T1119 (Automated Collection), T1005 (Data from Local System).

Stage 6 – Exfiltration (TA0010)

- Send enriched logs to attacker-controlled storage.

- MITRE IDs: T1041 (Exfiltration Over C2 Channel), T1567.002 (Exfiltration to Cloud Storage).

Stage 7 – Exploitation / Impact (TA0040)

- Use intel for insider trading, corporate espionage, or disinformation.

- MITRE ID: T1565.001 (Stored Data Manipulation).
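
For teams that want to track this chain in tooling, the seven stages can be captured as a small lookup table. What follows is a minimal sketch, not an official ATT&CK artifact: the `Stage` class, the `KILL_CHAIN` tuple, and the `stages_for_technique` helper are hypothetical names introduced here for illustration. The IDs are taken from the stages listed in this article, except that cloud-storage exfiltration is recorded under its sub-technique ID, T1567.002.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    """One stage of the hypothetical chatbot kill chain described above."""
    number: int
    name: str
    tactic_id: str              # ATT&CK tactic ID given for the stage
    technique_ids: tuple = ()   # technique IDs listed for the stage


# Stage 1 lists a tactic but no technique IDs, so its tuple is empty.
KILL_CHAIN = (
    Stage(1, "Perceived Harmless", "TA0043"),
    Stage(2, "Resource Development", "TA0042", ("T1583.006", "T1587.001")),
    Stage(3, "Initial Access", "TA0001", ("T1566.002", "T1078.004")),
    Stage(4, "Execution", "TA0002", ("T1059",)),
    Stage(5, "Collection", "TA0009", ("T1119", "T1005")),
    # T1567.002 is the sub-technique ID for Exfiltration to Cloud Storage.
    Stage(6, "Exfiltration", "TA0010", ("T1041", "T1567.002")),
    Stage(7, "Exploitation / Impact", "TA0040", ("T1565.001",)),
)


def stages_for_technique(technique_id):
    """Return stage numbers that list this technique or one of its sub-techniques."""
    prefix = technique_id + "."
    return [
        stage.number
        for stage in KILL_CHAIN
        if any(t == technique_id or t.startswith(prefix) for t in stage.technique_ids)
    ]
```

A detection rule tagged with a parent technique such as `T1567` can then be traced back to the stage it covers by calling `stages_for_technique("T1567")`, which makes gaps in coverage easier to spot.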

---

Defender’s Lens – Where to Break the Chain

If you remember nothing else from this article, remember this: every kill chain has choke points where it can be stopped. By focusing on these points, defenders can dramatically reduce the risk of a malicious chatbot operation succeeding.

- Stages 1–3: Vet chatbot domains before allowing them on corporate networks.

- Stages 4–5: Deploy DLP (Data Loss Prevention) controls to detect outbound sensitive data.

- Stage 6: Monitor outbound traffic to unknown cloud endpoints.

- Stage 7: Train staff on the risks of unvetted AI tools.
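
The first two choke points can be illustrated with a small outbound check: is the destination a vetted chatbot host, and does the text contain anything that looks sensitive? This is a rough sketch under stated assumptions rather than a real DLP product. The host in `APPROVED_CHAT_HOSTS`, the three patterns, and the function names are all hypothetical placeholders, and a production rule set would be far broader.

```python
import re
from urllib.parse import urlparse

# Hypothetical allowlist of vetted chatbot hosts; replace with your own.
APPROVED_CHAT_HOSTS = {"chat.approved-vendor.example"}

# Illustrative patterns only; real DLP rule sets cover much more.
SENSITIVE_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "aws_access_key": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "confidential_marker": re.compile(
        r"\b(confidential|internal only|merger)\b", re.IGNORECASE
    ),
}


def is_vetted(url):
    """True if the URL's host is on the approved chatbot allowlist."""
    host = (urlparse(url).hostname or "").lower()
    return host in APPROVED_CHAT_HOSTS


def find_sensitive(text):
    """Return the sorted names of all patterns that match the text."""
    return sorted(
        name for name, pattern in SENSITIVE_PATTERNS.items() if pattern.search(text)
    )


def review_outbound(url, text):
    """Decide what to do with text headed to a chatbot endpoint.

    Unvetted hosts are blocked outright; vetted hosts are flagged
    for review when the text matches a sensitive pattern.
    """
    findings = find_sensitive(text)
    if not is_vetted(url):
        return ("block", findings)
    if findings:
        return ("flag", findings)
    return ("allow", findings)
```

In this sketch an unvetted host is blocked regardless of content, reflecting the domain-vetting step, while the pattern check acts as a second layer for traffic to hosts that are already approved.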

---

Closing Thought

The danger isn’t that someone somewhere could build a malicious chatbot — it’s that anyone, anywhere, could build one before lunch. In 1999, we banned a toy because it might be a spy. In 2025, we pour confidential information into actual, networked systems run by unknown operators — and call it innovation. The Furby wasn’t the problem. Our eroded caution is.