
Hallucinations Aren’t Always the Model’s Fault

Sometimes, it’s the UX or us.

Every week I hear someone sigh, “GenAI hallucinated again.” And sure, sometimes the model really does free-associate into nonsense. But just as often, the real culprit isn’t the model—it’s the clunky way we’ve been told to use it.

Take chat UX. Most people (myself included, at times) fall into the trap of keeping everything in one endless thread: work notes, side projects, customer interactions, random shower thoughts. That’s like doing your taxes, journaling about your childhood, and drafting a breakup letter on the same napkin. No wonder the AI gets confused.

The model isn’t misbehaving. It’s just being forced to remix whatever context you hand it. When you give it a soup of different projects and expect clean answers, the soup is the problem. Unless, of course, the UX was itself designed by an LLM—then yes, we’ve gone recursive.

So what can you do about it?

- Start a new chat per project/customer. Treat threads like sandboxes, not junk drawers.

- If you must reuse a thread, prepend a scope-setting line: “From this point on, only consider Customer X. Ignore all prior references.”

- Bonus move: Ask the AI to watch for inconsistencies. You can literally tell it: “If you detect mentions of different customers or contexts, stop and ask me before answering.” That turns silent hallucinations into visible red flags.
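
For anyone who pastes these lines often, here is a minimal sketch of the second and third tips as a tiny Python helper. The function name `scoped_prompt` and both constants are hypothetical, made up for illustration; the wording of the two lines comes straight from the bullets above. It only builds text and does not call any model or API.

```python
# Hypothetical helper: names are illustrative, not part of any library.
SCOPE_TEMPLATE = (
    "From this point on, only consider {customer}. "
    "Ignore all prior references."
)
GUARD_LINE = (
    "If you detect mentions of different customers or contexts, "
    "stop and ask me before answering."
)


def scoped_prompt(customer: str, question: str, guard: bool = True) -> str:
    """Prepend a scope-setting line (and an optional guard line) to a question."""
    parts = [SCOPE_TEMPLATE.format(customer=customer)]
    if guard:
        # Ask the model to surface mixed contexts instead of silently blending them.
        parts.append(GUARD_LINE)
    parts.append(question)
    return "\n\n".join(parts)


message = scoped_prompt("Customer X", "Summarize the open action items.")
print(message)
```

Whether the model actually honors the scope line is not guaranteed; this just makes the habit cheap enough that you'll keep it.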

The lesson here is simple: don’t blame the LLM for the user interface it was dressed in.

---

For those who want the details

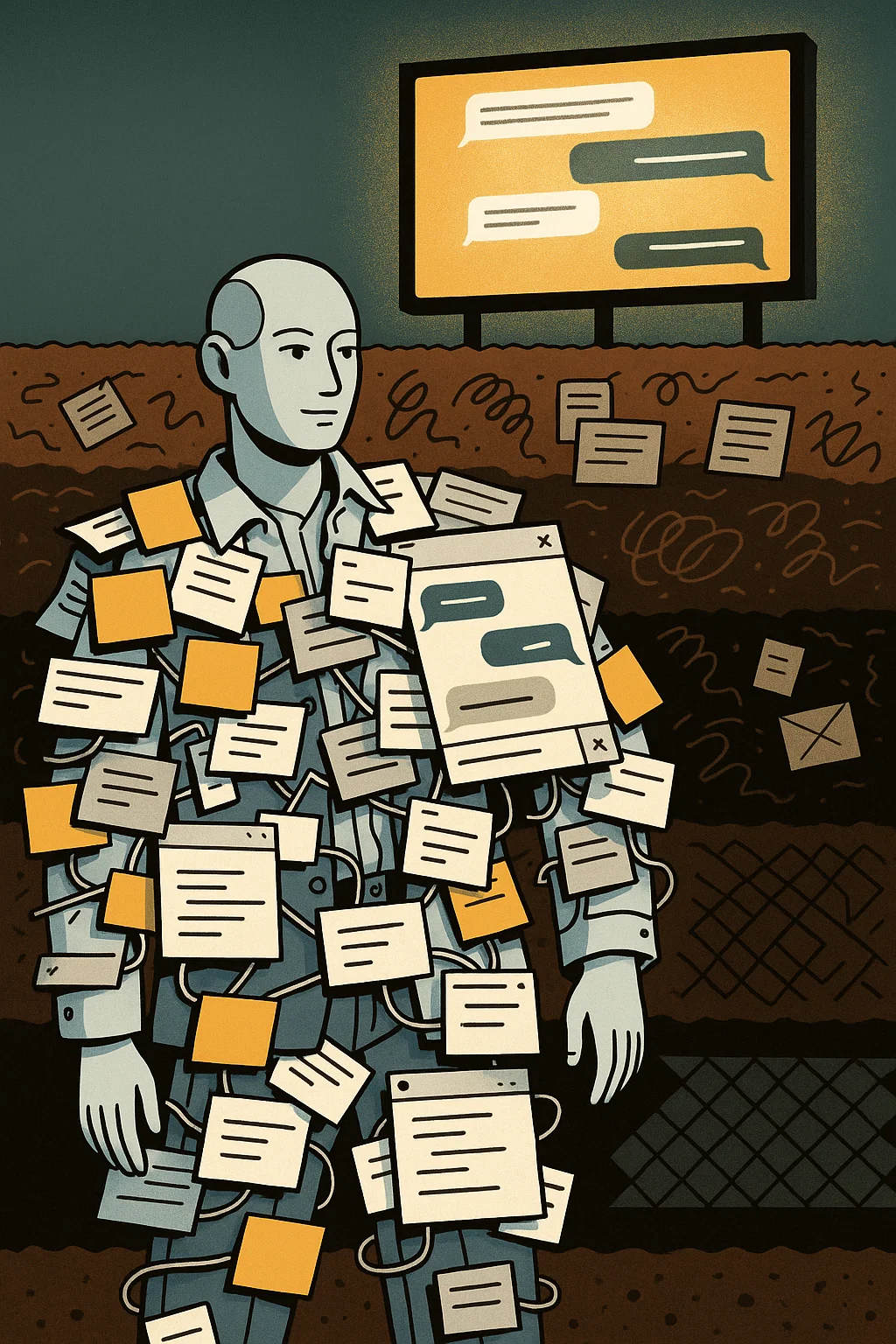

Think of this in layers, like geological strata:

- The LLM layer (bedrock): It’s just a pattern matcher. It has no concept of “sessions”; it only sees the text in its prompt.

- The memory/context layer (soil): The system decides how much past conversation to shove into the prompt. If you’ve mixed customer A and customer B, both get crammed in.

- The UX layer (billboard on the surface): The chat window makes it look like you can just keep piling on new topics forever. But the deeper layers are blind to “topics.” They only see the blended text.
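
To make the soil layer concrete, here is a toy sketch of how a chat system might assemble a prompt from one long thread. It assumes the simplest possible policy (keep the last few messages, flatten them into text); real products use their own, usually more elaborate, strategies. `Message` and `build_prompt` are hypothetical names for illustration.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str
    text: str


def build_prompt(history: list[Message], new_message: str, max_messages: int = 4) -> str:
    """Flatten the most recent messages plus the new one into a single prompt string."""
    # Guard the zero case: history[-0:] would return the whole list.
    recent = history[-max_messages:] if max_messages > 0 else []
    lines = [f"{m.role}: {m.text}" for m in recent]
    lines.append(f"user: {new_message}")
    return "\n".join(lines)


# One thread, two customers: the junk-drawer pattern.
thread = [
    Message("user", "Customer A renews in March."),
    Message("assistant", "Noted: Customer A renews in March."),
    Message("user", "Customer B renews in June."),
    Message("assistant", "Noted: Customer B renews in June."),
]

prompt = build_prompt(thread, "When does the customer renew?")
print(prompt)
```

Both customers land in the same prompt, and nothing in that text says which one "the customer" refers to. From the bedrock's point of view, the question is ambiguous by construction.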

When people cry “hallucination,” they often blame the bedrock when it was really the billboard that invited the mess.

So, yes, models hallucinate sometimes. But if you’re using the same thread for multiple contexts, you’ve set yourself up for cross-contamination. Clever prompts are a workaround; the real fix is better UX: project-labeled sessions, one-click context wipes, automatic domain detection.
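
As a rough sketch of what project-labeled sessions and a one-click wipe could look like under the hood, here is a small in-memory store keyed by project. `SessionStore` and its methods are hypothetical; this is one possible shape, not how any particular chat product works, and it leaves out automatic domain detection entirely.

```python
from collections import defaultdict


class SessionStore:
    """Keeps one message list per project label so contexts never blend."""

    def __init__(self) -> None:
        self._threads: dict[str, list[str]] = defaultdict(list)

    def add(self, project: str, message: str) -> None:
        self._threads[project].append(message)

    def context(self, project: str) -> list[str]:
        # Return a copy so callers cannot mutate the stored thread.
        return list(self._threads.get(project, []))

    def wipe(self, project: str) -> None:
        # The "one-click context wipe": drop everything for this project.
        self._threads.pop(project, None)


store = SessionStore()
store.add("customer-a", "Renews in March.")
store.add("customer-b", "Renews in June.")

print(store.context("customer-a"))
store.wipe("customer-a")
print(store.context("customer-a"))
```

The point of the design is that only one project's messages are ever handed to the model, so there is nothing from the other project to bleed through.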

Until then, we’ll keep telling the model “forget everything before this,” which is like asking a friend to forget an embarrassing story. They’ll try, but traces linger, because the earlier text is still sitting in the context the model reads.

---

Call to action: If this resonates, share it with your team. The more people understand that hallucinations are often a UX problem, the faster we’ll push for better design. Let’s stop blaming the models for being dressed in the wrong clothes.