Beyond Automating Bottlenecks

Why the next big win is not just faster workflow, but better work selection

The Context

Organizations automate workflows for good reasons. Faster handoffs, cleaner routing, quicker summaries, fewer manual steps, and better operational telemetry create real value. Automation lowers friction, improves consistency, and generates the data that feeds continuous improvement.

This article is not an argument against automation. We still need it.

But we should be honest about what workflow automation can and cannot do on its own. It can make existing processes move faster. It does not automatically create the larger evolutionary win many teams are hoping for.

Work selection is not a universal answer, and it is not the only strategy worth considering when organizations outgrow older operating models. It is one useful lens, especially when faster workflow is not producing the outcome gains leaders expected.

The Hidden Trap

Many organizations are pushing automation because they are not getting to all of the work on their plate. That pressure is real. Rising alert volumes, growing review backlogs, and too much low-yield coordination work are all signals that the old answer is running out of room.

But in some industries, getting to everything is not attainable and sometimes not desirable. To improve outcomes, we also have to get better at choosing what deserves attention first.

If we automate a constrained workflow without improving how work is selected, we get faster execution of the same allocation logic. That might save time and reduce cost, but it does not guarantee that scarce experts are being directed where they can do the most good.

In the worst case, an organization spends heavily to accelerate throughput, only to use the recovered time on work that was never especially consequential in the first place.

That does not mean every organization should pivot immediately to this lens. It means leaders should stay alert to the possibility that the next meaningful gain may come from a different strategy than the one that worked before.

Why The Old Model Worked For So Long

Corporate inertia is real, but some of it is earned.

The older model was not foolish. For decades, it was close to the right answer. When there were fewer people, fewer customers, fewer transactions, fewer signals, and less constant connectivity, experts could still keep up with most of what mattered. Even when some lower-value work slipped through, the total volume remained manageable.

Manual review, local judgment, and familiar workflows were often enough because the scale was still survivable.

That history matters. In many cases, organizations are not paying for failure. They are paying for success that created more volume, more signals, and more decision demand than older review models were built to absorb.

We are not cleaning up a long-running mistake. We are adapting a successful system to conditions it was never designed to handle forever.

The Real Scarcity Is Judgment

In many organizations, the real scarcity is not labor alone. It is judgment.

What "Work Selection" Means

By work selection, I mean the process of deciding which incoming work deserves scarce human attention, and at what level.

That includes questions such as:

- What should be reviewed immediately?

- What only needs light review?

- What is routine enough to monitor rather than escalate?

- What is unlikely to matter unless additional signals emerge?

Work selection happens before most workflow effort is spent. It is the layer that determines where expert judgment should go first.

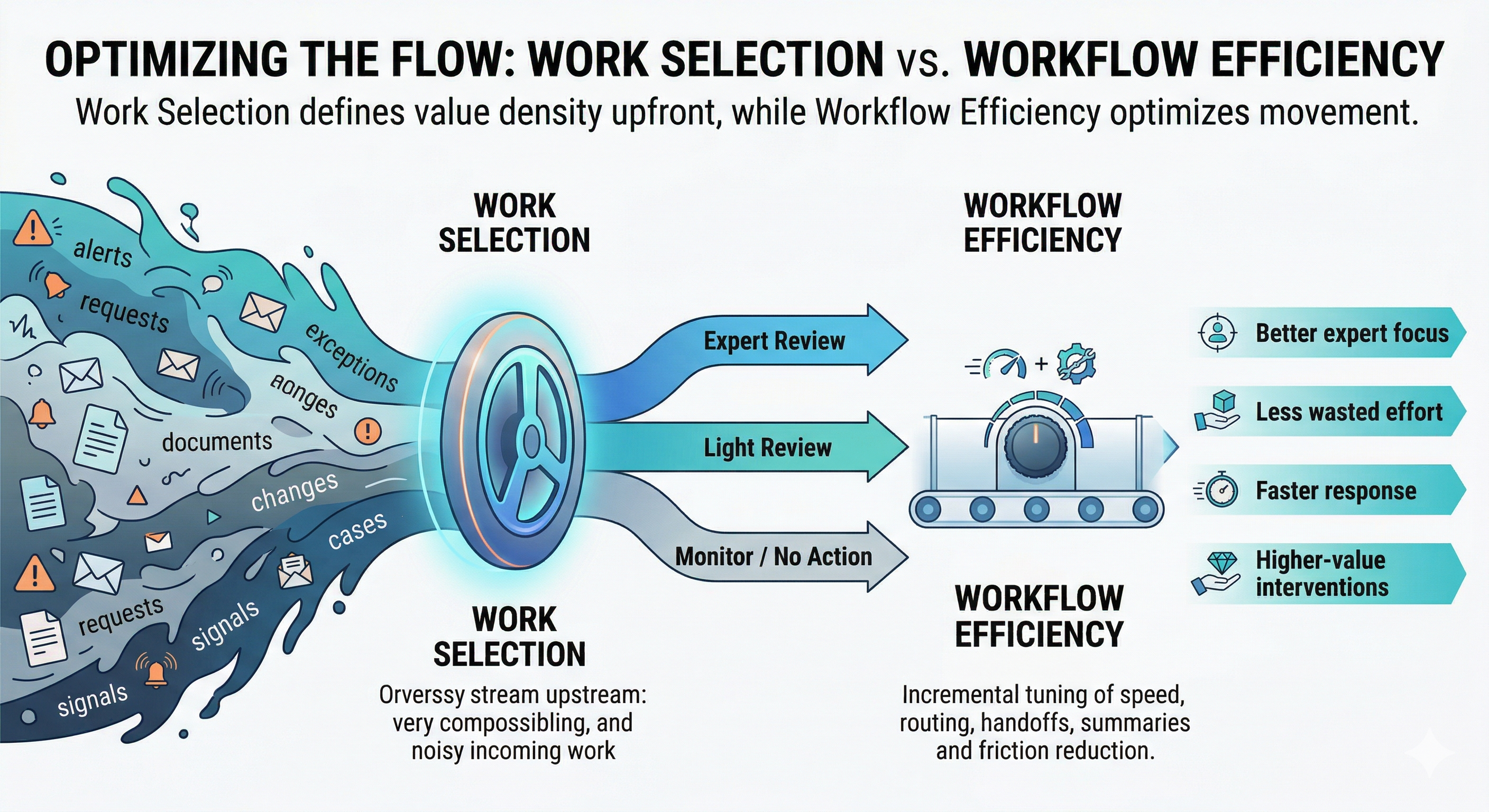

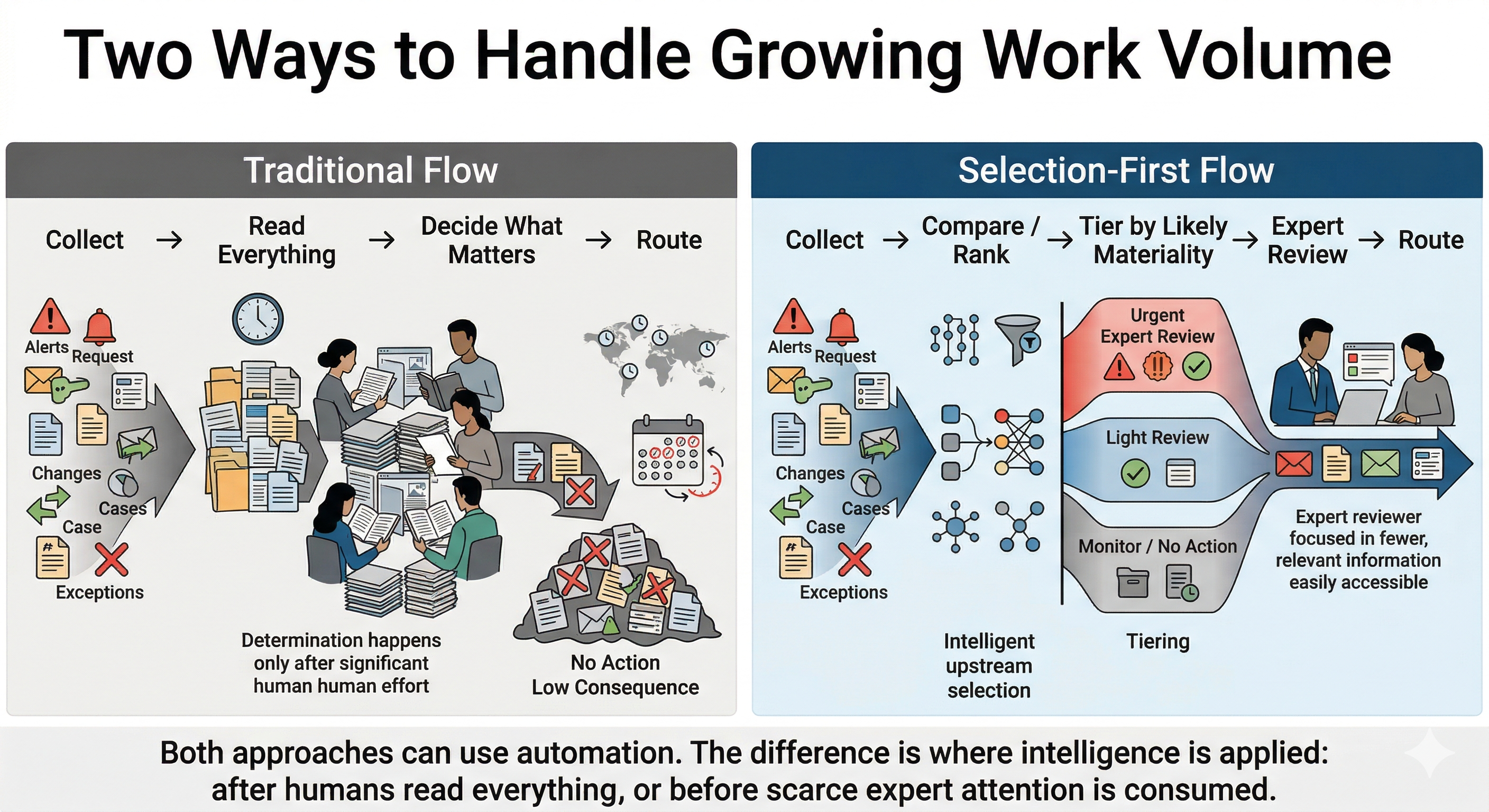

Workflow automation improves how work moves through an existing process. Work selection improves how an organization decides which work should enter expensive human review in the first place.

Judgment, in this sense, is the ability to decide what deserves expert attention first, what only needs light review, what is routine, and where intervention is likely to create the most value. That is a different problem from workflow movement.

Workflow asks, "How do we move work through the pipe faster?"

Judgment asks, "Which work belongs in the pipe at full expert depth in the first place?"

That distinction matters more than many teams realize.

Two Knobs, Not One

This is where many transformation conversations get stuck.

If automation is the little knob, work selection is the big knob.

The little knob tunes workflow efficiency inside the current process. It reduces friction, compresses cycle time, and helps teams move work with less manual effort.

The big knob tunes value. It influences which work receives scarce expert attention at all. It changes the value density of human effort before the workflow even begins.

Maximizing value often requires both.

If we only turn the little knob, we may get a faster system. If we also turn the big knob, we improve the odds that the faster system is spending its energy on the right things.

Why Modern AI Changes The Selection Problem

AI did not create the overload. Scale created the overload.

What modern AI changes is the practical range of selection tasks that can now be done before scarce human attention is consumed.

Older automation, rules engines, and reporting still matter. They work well when conditions are stable, categories are known, and the decision logic can be expressed clearly in advance. They are excellent at handling structured inputs, predictable thresholds, and repeatable routing.

The problem is that many real selection problems are only partly structured. Important work often hides inside messy language, scattered context, subtle change from baseline, weak early signals, and combinations of factors that are hard to capture in static rules.

Modern AI expands what is possible in that gap. It can compare new content to prior content at scale, summarize large volumes of text, surface likely relevance across unstructured sources, detect similarity and novelty, and help rank likely importance even when the signals are diffuse. It can do this continuously, across time zones, and outside the human clock.

That does not eliminate the need for rules, reporting, or human judgment. It changes where those tools can be applied. Instead of waiting for humans to manually read, compare, and narrow the field first, organizations can use AI to reduce the candidate set, compress context, and surface the items most worth expert review.

In simple terms, older automation was strongest at moving work. Modern AI is stronger at helping decide which work is most worth moving through expert attention at full depth.

An Example: Regulatory Change Monitoring

A useful example is regulatory change monitoring across multiple jurisdictions.

In the traditional model, experts spend time checking source sites, reading bulletins, comparing updates to prior guidance, confirming that most changes do not require action, and then routing the few meaningful changes to the right people. That model worked when the volume was lower.

At today's scale, simply automating the current workflow still leaves a lot of value on the table. An organization can automatically collect updates, summarize them, create tickets, and route work faster, but that mostly creates a faster version of the same review queue.

The deeper opportunity is selection.

A more evolved approach continuously monitors sources across time zones, compares new content to prior content, checks whether a change affects the company's actual footprint, and ranks items by likely materiality. It can separate likely no-action items from light-review items and urgent expert-review items before scarce human attention is consumed.

That does not remove humans from the loop. It protects their time for the changes most likely to matter.

This is the difference between accelerating workflow and improving the choice of work. Workflow automation helps move updates faster. Better selection helps experts spend their time on the updates that are actually consequential.
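
The selection step in this example can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the `FOOTPRINT` set, the `Update` fields, and the thresholds are all hypothetical. Character-level similarity from the standard library's `difflib` stands in for whatever comparison method an organization would actually use. It is a crude proxy, since a small textual edit, such as a changed deadline, can still be highly material.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher

# Hypothetical footprint: jurisdictions where the company actually operates.
FOOTPRINT = {"DE", "FR", "US-CA"}

@dataclass
class Update:
    jurisdiction: str
    prior_text: str
    new_text: str

def novelty(update: Update) -> float:
    """Return 0.0 for identical text and values near 1.0 for very different text."""
    ratio = SequenceMatcher(None, update.prior_text, update.new_text).ratio()
    return 1.0 - ratio

def triage(update: Update) -> str:
    """Assign a review tier using illustrative, untuned thresholds."""
    if update.jurisdiction not in FOOTPRINT:
        return "likely no action"  # outside the company's footprint
    score = novelty(update)
    if score >= 0.5:
        return "urgent expert review"
    if score >= 0.1:
        return "light review"
    return "likely no action"

updates = [
    Update("DE", "Reports are due within 30 days.",
           "Reports are due within 30 days."),
    Update("FR", "Reports are due within 30 days.",
           "Reports are due within 30 days of the quarter end."),
    Update("BR", "Old guidance.",
           "Entirely new licensing regime for all operators."),
    Update("US-CA", "Old guidance.",
           "Entirely new licensing regime for all operators."),
]
tiers = [triage(u) for u in updates]
for update, tier in zip(updates, tiers):
    print(update.jurisdiction, "->", tier)
```

The point of the sketch is the ordering of the checks: footprint relevance and change from baseline are evaluated before any expert reads the update, so human attention starts with the items in the top tier.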

When Work Selection Is The Missing Lever

This idea does not fit everywhere.

In some environments, every item truly does require full review. In others, the larger opportunity is not selection, but better workflow design inside a process that is already appropriately scoped.

A useful thought experiment is to ask six questions.

- Do we have more incoming work than experts can review at full depth? If the answer is yes, selection is already happening, whether the organization admits it or not. The only question is whether it is happening deliberately or informally.

- Does a large share of reviewed work lead to little or no meaningful action? If highly trained people spend substantial time confirming that most items are low consequence, that is a strong signal that better selection may create more value than faster workflow alone.

- Are some items clearly more important than others? If some work is clearly more consequential, time-sensitive, risky, or valuable than other work, then there is likely room to improve prioritization before expert effort is spent.

- Can earlier signals help predict what is likely to matter? If source, history, context, similarity, location, business relevance, or other signals can help distinguish likely high-value work from likely low-value work, then selection may be a practical design option.

- Is the process constrained by the human clock? If work piles up overnight, across time zones, or between review cycles, then there may be value in using machines to monitor, compare, rank, and queue work continuously before humans engage.

- Is the current pain mostly about friction, or mostly about focus? If the main pain is duplicate entry, poor routing, manual status updates, or slow handoffs, workflow automation may be the right first move. If the deeper pain is that experts are buried in too much low-value work, selection may be the bigger lever.

If most of the answers point toward overload, uneven importance, and a problem of focus rather than friction, then work selection is probably worth serious attention. If not, the right response may still be workflow redesign, better staffing, simpler policy, narrower scope, or some other strategy better suited to the situation.

A Practical Way To Start

For teams that want to explore this seriously, a simple sequence is enough to begin.

Step 1: Map the incoming work

Identify the major categories of incoming work, the source of each, the current review path, and who spends expert time on it.

Step 2: Measure the review yield

Estimate how often full expert review leads to meaningful action. Look for categories where a great deal of effort produces very little change in outcome.
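
As a rough sketch of what measuring review yield can look like, the snippet below computes, per category, the share of expert reviews that led to action and the total expert minutes spent. The log format and the sample categories are hypothetical; in practice the data would come from whatever ticketing or case system the team already uses.

```python
from collections import defaultdict

# Hypothetical review log: (category, expert_minutes, led_to_action).
review_log = [
    ("vendor alerts", 30, False),
    ("vendor alerts", 20, False),
    ("vendor alerts", 25, True),
    ("vendor alerts", 25, False),
    ("customer escalations", 40, True),
    ("customer escalations", 20, True),
]

def review_yield(log):
    """Return {category: (share of reviews that led to action, total minutes)}."""
    counts = defaultdict(lambda: [0, 0, 0])  # reviews, actions, minutes
    for category, minutes, acted in log:
        entry = counts[category]
        entry[0] += 1
        entry[1] += int(acted)
        entry[2] += minutes
    return {
        category: (actions / reviews, minutes)
        for category, (reviews, actions, minutes) in counts.items()
    }

yields = review_yield(review_log)
# List the lowest-yield categories first.
for category, (rate, minutes) in sorted(yields.items(), key=lambda kv: kv[1][0]):
    print(f"{category}: {rate:.0%} of reviews led to action, {minutes} expert minutes")
```

Categories with a low action rate and a large share of expert minutes are the natural candidates for a lighter review tier.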

Step 3: Identify the selection signals

List the signals that might help separate likely high-value work from likely low-value work before deep review begins. These may include source type, business relevance, severity indicators, novelty, geography, customer segment, prior history, or change from baseline.

Step 4: Define review tiers

Create simple review levels such as:

- monitor only

- light review

- expert review

- urgent expert review

The goal is not to remove judgment. It is to reserve deep judgment for the work most likely to deserve it.
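
One simple way to connect the signals from Step 3 to these tiers is a weighted score with cut-offs. The sketch below assumes each signal has already been scored between 0.0 and 1.0 upstream. The signal names, weights, and thresholds are hypothetical placeholders that a team would set and revise from its own review history.

```python
from enum import IntEnum

class Tier(IntEnum):
    MONITOR_ONLY = 0
    LIGHT_REVIEW = 1
    EXPERT_REVIEW = 2
    URGENT_EXPERT_REVIEW = 3

# Hypothetical weights; they sum to 1.0 so the score also stays in [0, 1].
WEIGHTS = {"business_relevance": 0.4, "severity": 0.4, "novelty": 0.2}

def score(signals: dict[str, float]) -> float:
    """Weighted sum of the known signals; missing signals count as 0.0."""
    return sum(weight * signals.get(name, 0.0) for name, weight in WEIGHTS.items())

def assign_tier(signals: dict[str, float]) -> Tier:
    """Map a score to a review tier using illustrative cut-offs."""
    value = score(signals)
    if value >= 0.8:
        return Tier.URGENT_EXPERT_REVIEW
    if value >= 0.5:
        return Tier.EXPERT_REVIEW
    if value >= 0.2:
        return Tier.LIGHT_REVIEW
    return Tier.MONITOR_ONLY

examples = {
    "routine notice": {},
    "minor change": {"business_relevance": 0.5, "severity": 0.2, "novelty": 0.1},
    "relevant change": {"business_relevance": 0.9, "severity": 0.6, "novelty": 0.3},
    "major new rule": {"business_relevance": 1.0, "severity": 1.0, "novelty": 1.0},
}
for name, signals in examples.items():
    print(name, "->", assign_tier(signals).name)
```

A transparent score like this is easy for experts to inspect and challenge, which matters more at the start than predictive sophistication.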

Step 5: Test in shadow mode

Before changing production workflows, compare the proposed selection logic against real historical or live work. Ask whether it would have surfaced the right items early, missed anything important, or over-prioritized noise.
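
A shadow-mode comparison can be as simple as set arithmetic over item identifiers. The sketch below assumes two hypothetical inputs: the items the proposed logic would have prioritized, and the items that experts later judged to have mattered.

```python
def shadow_report(proposed_priority: set, actually_mattered: set) -> dict:
    """Compare what the selection logic flagged with what turned out to matter."""
    surfaced = proposed_priority & actually_mattered
    missed = actually_mattered - proposed_priority
    noise = proposed_priority - actually_mattered
    return {
        # Share of important items the logic would have surfaced.
        "recall": len(surfaced) / len(actually_mattered) if actually_mattered else None,
        # Share of flagged items that were actually important.
        "precision": len(surfaced) / len(proposed_priority) if proposed_priority else None,
        "missed": sorted(missed),
        "over_prioritized": sorted(noise),
    }

# Hypothetical item identifiers from a historical review period.
flagged = {"A", "B", "C", "D"}
mattered = {"A", "B", "E"}
report = shadow_report(flagged, mattered)
print(report)
```

The `missed` list deserves the closest attention: each entry is an important item the proposed logic would have deprioritized, and experts should review those cases before anything changes in production.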

Step 6: Measure the right outcomes

Do not measure only cycle time. Also measure whether expert time is shifting toward more consequential work, whether important items are being surfaced earlier, and whether the quality of intervention improves.
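
Two of these outcome measures can be computed from a basic review record, as in the sketch below. The record layout and the sample numbers are hypothetical and exist only to show the calculation. Quality of intervention is harder to quantify and is not covered here.

```python
from statistics import median

def outcome_metrics(reviews):
    """reviews: list of (expert_minutes, was_consequential, hours_until_surfaced)."""
    total_minutes = sum(minutes for minutes, _, _ in reviews)
    consequential_minutes = sum(minutes for minutes, mattered, _ in reviews if mattered)
    delays = [hours for _, mattered, hours in reviews if mattered]
    return {
        "consequential_time_share": (
            consequential_minutes / total_minutes if total_minutes else None
        ),
        "median_hours_to_surface": median(delays) if delays else None,
    }

# Illustrative records for a period before and after a selection change.
before = [(30, False, 20), (30, False, 12), (40, True, 48), (20, True, 24)]
after = [(10, False, 20), (50, True, 6), (40, True, 2)]

metrics_before = outcome_metrics(before)
metrics_after = outcome_metrics(after)
print("before:", metrics_before)
print("after: ", metrics_after)
```

Tracking these alongside cycle time shows whether recovered expert time is actually moving toward consequential work.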

Where This Idea Is A Poor Fit

Work selection is less useful when:

- every item truly requires full review,

- the cost of missing even a low-probability item is unacceptable,

- there are too few predictive signals to distinguish important work early,

- or the real bottleneck is plainly procedural rather than judgment-based.

In those cases, classic workflow automation may be the right answer. The broader point is not that every organization should adopt the same playbook. It is that today's environment often rewards a wider range of responses than the past.

Signals That This May Be Worth Exploring Now

Not every organization needs to act immediately. Not every workflow is the right fit. But some signals suggest that it may be worth starting to think in this direction.

You may have a meaningful work-selection opportunity if you are seeing patterns such as:

- rising alert or exception volume,

- growing review backlogs,

- experts spending large amounts of time confirming that most items do not matter,

- recurring complaints that teams are busy but outcomes are not improving proportionally,

- growing dependence on a small number of hard-to-replace experts,

- increasing difficulty keeping up with external change, especially across geographies, schedules, or large unstructured information streams.

None of these signals prove that selection is the answer. But they do suggest that faster workflow alone may not be enough.

Closing: A Celebration, Not A Condemnation

This is not a complaint about how badly organizations performed. It is, in many ways, a recognition of how far they have come.

The older model worked long enough to help create today's scale, complexity, and abundance. We are on the far side of that success. The next step is not to reject the people and systems that brought us here. It is to build on them wisely.

Before we celebrate another faster pipeline, we should ask whether we are improving the flow of work, improving the choice of work, or missing an altogether different lever that today's conditions make more important.

Appendices

Evidence snapshot and limits

What the evidence supports reasonably well

Many organizations are experiencing overload in the form of rising communication volume, interruptions, backlogs, and too much low-yield coordination work.

Newer AI tools can create measurable value in tasks adjacent to summarization, prioritization, triage, and routing, especially where work is high-volume and partly unstructured.

In at least some domains, AI-assisted triage and prioritization can reduce manual review effort, speed up analysis, or improve how quickly high-value items are surfaced.

What remains more inferential

We do not have one universal study proving that work selection matters more than workflow efficiency across all industries.

The stronger claim is narrower: in many knowledge-work environments, faster workflow alone is an incomplete response when the deeper constraint is scarce expert attention.

The case for work selection is strongest where reviewed work often leads to no action, where incoming work exceeds expert capacity, and where earlier signals can help predict likely materiality.

What readers should take from this

This article argues for a useful lens, not a universal law.

Work selection is one of several strategies worth considering when workflow automation alone is not delivering the desired gains.

The practical question is not whether every organization should adopt this approach immediately, but whether this lens reveals hidden leverage in a specific work stream.

Transparency

While ChatGPT, NotebookLM, and Gemini helped the author research, illustrate, and edit this article, the ideas presented here are the author's.
